Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

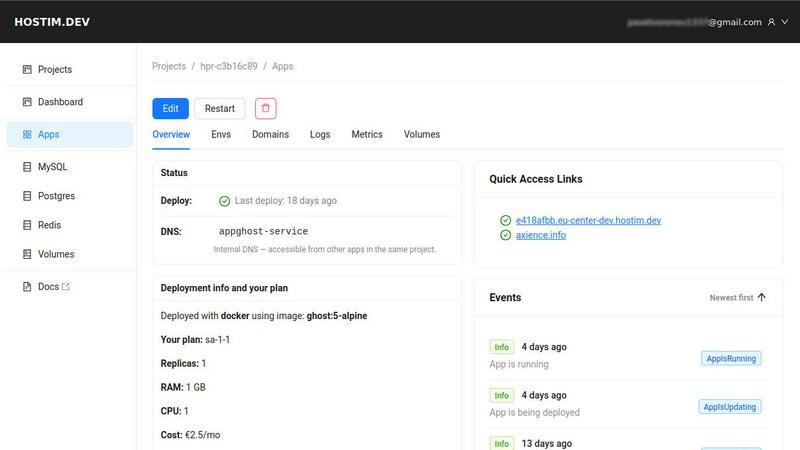

Hostim.dev

Hostim.dev is your no-brainer Docker hosting in the EU with databases included, so you can ship fast and chill.

Last updated: March 1, 2026

OpenMark AI

Stop guessing which AI model slaps for your task: just describe it, and we'll benchmark 100+ models for you in minutes, no API keys needed.

Last updated: March 26, 2026

Feature Comparison

Hostim.dev

Deploy with Docker, Git, or Compose

Skip the ceremony. You can launch your app by simply pasting a Docker Compose file, pointing to a Git repo, or dropping in a Docker image. It's the ultimate "just deploy it" button. No need to write complex Kubernetes manifests or CloudFormation templates. If your app runs in Docker, it runs here, period. Get from zero to live in literal minutes.

Built-in Databases & Volumes

No more hunting for separate database hosting. The moment you need a MySQL, PostgreSQL, or Redis instance, you can spin one up instantly right from the Hostim dashboard. They come pre-wired to your app with environment variables, so everything just connects. Persistent storage volumes? Also included. It's like having a full stack on tap.
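To make "pre-wired with environment variables" a bit more concrete, here's a minimal Python sketch of the app side: it reads a PostgreSQL connection URL from the environment and splits it into settings. The variable name `DATABASE_URL` and the helper `db_settings_from_env` are hypothetical, not Hostim's documented names, so check the dashboard for the variables your project actually gets.

```python
import os
from urllib.parse import urlparse


def db_settings_from_env(var_name: str = "DATABASE_URL") -> dict:
    """Parse a URL like postgres://user:pass@host:5432/dbname from the environment.

    DATABASE_URL is a hypothetical variable name, not a documented Hostim one.
    """
    url = os.environ.get(var_name)
    if url is None:
        raise RuntimeError(f"{var_name} is not set; is a database attached to this project?")
    parts = urlparse(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,
        "port": parts.port or 5432,  # fall back to the PostgreSQL default port
        "user": parts.username,
        "password": parts.password,
        "database": parts.path.lstrip("/"),  # path is "/dbname"
    }


if __name__ == "__main__":
    # Simulate what the platform would inject, then read it back.
    os.environ.setdefault("DATABASE_URL", "postgres://app:secret@db.internal:5432/appdb")
    print(db_settings_from_env())
```

Reading config from the environment instead of hardcoding it is what lets the same image run on your laptop and on the platform without changes.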

EU Bare-Metal & GDPR Compliance

Your data chills in Germany, on physical (bare-metal) servers, not in some random virtual cloud bucket. This means top-tier performance and, crucially, GDPR-compliant hosting with your data kept inside the EU. For you and your EU clients, this is a massive win: no legal headaches about data residency. It's ethical, fast hosting without the big cloud lock-in.

Per-Project Isolation & Billing

Every project you create lives in its own isolated environment (a dedicated Kubernetes namespace under the hood). This is perfect for security and for managing multiple client apps. Even better, each project has its own cost tracking. You can see exactly what each app costs, making client billing or budget tracking stupidly simple.
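Conceptually, per-project cost tracking comes down to tagging every hourly usage line with its project and summing. Here's a minimal sketch, assuming made-up resources and hourly rates (these are not Hostim's real prices, and `UsageRecord` / `cost_per_project` are hypothetical names):

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    project: str              # one project per client, e.g. "client-a"
    resource: str             # e.g. "app", "postgres", "volume"
    hours: float              # hours the resource was running
    rate_eur_per_hour: float  # hypothetical rate, not Hostim's price list


def cost_per_project(records: list[UsageRecord]) -> dict[str, float]:
    """Sum hourly usage into a per-project total in EUR, rounded to cents."""
    totals: dict[str, float] = defaultdict(float)
    for r in records:
        totals[r.project] += r.hours * r.rate_eur_per_hour
    return {project: round(total, 2) for project, total in totals.items()}


records = [
    UsageRecord("client-a", "app", 720, 0.005),
    UsageRecord("client-a", "postgres", 720, 0.004),
    UsageRecord("client-b", "app", 100, 0.005),
]
print(cost_per_project(records))
```

Because each client's resources live in their own project, the invoice for a client is just that project's total.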

OpenMark AI

Plain Language Task Wizard

Forget writing complex code or JSON configs. You just type out what you want the AI to do, like "extract the invoice total and due date from this messy email" or "write a chill marketing tweet for this new feature." OpenMark's wizard takes your vibe and builds the benchmark. It's the ultimate "explain it to me like I'm five" but for setting up professional-grade LLM tests. No PhD in prompt engineering required.

Real API Cost & Latency Showdown

This ain't about theoretical token prices on a spec sheet. OpenMark makes real API calls to every model and shows you the actual receipt—how much that specific request cost and how long it actually took to come back. You can instantly spot the models that give you 95% of the quality for 50% of the price, or the ones that are weirdly slow. It's all about cost efficiency, not just raw cheapness.
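Here's what "cost efficiency, not raw cheapness" can look like as logic: first keep only the models within some fraction of the best quality score, then take the cheapest of those. A minimal Python sketch with made-up model names, scores, and prices; the 95% tolerance and the `cheapest_near_best` helper are illustrative assumptions, not OpenMark's actual scoring method.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    name: str
    quality: float    # average benchmark score, 0-100 (hypothetical scale)
    cost_usd: float   # measured cost of the request
    latency_s: float  # measured wall-clock latency


def cheapest_near_best(results: list[ModelResult], tolerance: float = 0.95) -> ModelResult:
    """Return the cheapest model whose quality is at least `tolerance` x the best quality."""
    best_quality = max(r.quality for r in results)
    good_enough = [r for r in results if r.quality >= tolerance * best_quality]
    return min(good_enough, key=lambda r: r.cost_usd)


# Made-up results for illustration only.
results = [
    ModelResult("model-a", quality=92.0, cost_usd=0.0200, latency_s=3.1),
    ModelResult("model-b", quality=88.0, cost_usd=0.0090, latency_s=1.4),
    ModelResult("model-c", quality=71.0, cost_usd=0.0010, latency_s=0.9),
]
pick = cheapest_near_best(results)
print(pick.name, pick.quality, pick.cost_usd)
```

Filtering on quality before sorting on price is the whole point: the cheapest model overall (`model-c`) never makes the cut because it isn't good enough.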

Variance & Consistency Scoring

Any model can have a one-hit-wonder output. OpenMark runs your task multiple times for each model to see the variance. You get to see if Model A nails it 9 times out of 10, or if Model B is a complete wildcard that gives you genius one minute and gibberish the next. This stability check is crucial for shipping something you can actually trust in production, not just a cool demo.
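One simple way to express "nails it 9 times out of 10" is a pass rate: the share of repeated runs whose score clears a bar. A minimal sketch, assuming scores on a 0 to 1 scale and a hypothetical pass threshold of 0.8 (OpenMark's actual scoring may differ):

```python
def pass_rate(scores: list[float], threshold: float = 0.8) -> float:
    """Share of runs whose score meets the threshold (hypothetical 0-1 scale)."""
    if not scores:
        raise ValueError("need at least one run")
    return sum(score >= threshold for score in scores) / len(scores)


# Ten runs of the same prompt per model (made-up scores).
model_a = [0.90, 0.85, 0.92, 0.88, 0.91, 0.40, 0.87, 0.90, 0.93, 0.89]
model_b = [1.00, 0.10, 0.95, 0.20, 0.98, 0.15, 0.90, 0.30, 0.97, 0.05]

print("Model A:", pass_rate(model_a), "best run:", max(model_a))
print("Model B:", pass_rate(model_b), "best run:", max(model_b))
```

Model B posts the single best run, but only half its runs clear the bar, which is exactly the one-hit-wonder trap a single demo run would hide.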

Hosted Benchmarking (No Key Drama)

The biggest flex? You don't need to set up individual API keys for OpenAI, Anthropic, Google, etc., just to compare them. You buy OpenMark credits and it handles all the backend API calls across its massive model catalog. It removes the setup hell and lets you focus purely on the results. It's like having a universal remote for every AI model out there.

Use Cases

Hostim.dev

The Solo Developer / Freelancer

You're building projects for clients or your own SaaS. You need to deploy fast, hand over a clean, running app to the client, and not get stuck managing servers. Hostim lets you deploy from your stack, gives you isolated projects per client, and provides clear billing for each. Ship faster, look more pro.

The Startup or Small Team

You're moving fast and need to iterate quickly. You don't have a dedicated DevOps person, and cloud setup is a time-sink. With Hostim, your backend team can deploy their Dockerized microservices or monoliths instantly, with databases ready to go. Scale resources from the UI as you grow, all with predictable EU pricing.

The Digital Agency

Managing hosting for multiple clients on traditional cloud platforms is a billing and access nightmare. Hostim solves this with strict per-project isolation. Each client's app is separate and secure. You get a clear cost breakdown per project to invoice accurately, and everything is hosted in the EU, which clients love.

The Student or Learner

You want to learn real-world deployment with Docker, databases, and APIs on actual infrastructure, not just localhost. Hostim's free trial and student credits let you deploy real projects you can add to your portfolio. Learn the full lifecycle without the sysadmin pain or scary bills.

OpenMark AI

Pre-Launch Model Selection

You're about to bake an LLM into your app's new support chatbot. Do you go with GPT-4o, Claude 3.5 Sonnet, or a fine-tuned Llama? Instead of debating in Slack, create a benchmark with real user query examples. Run it. In minutes, you'll have data on which model understands your domain best, responds fastest, and keeps your API bill from being absolutely unhinged.

Validating Cost-Efficiency for a Workflow

Your data extraction pipeline uses an expensive top-tier model for every single document. Is that overkill? Use OpenMark to test your extraction prompts against cheaper, smaller models. You might find one that's just as accurate for simple forms, letting you save the big guns for only the complex cases and slashing your monthly costs dramatically.
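The savings are easy to sanity-check with back-of-the-envelope arithmetic. A minimal sketch, assuming hypothetical per-document prices and assuming your benchmark already showed the cheaper model is accurate enough on the simple forms:

```python
def monthly_cost(docs: int, simple_share: float, big_cost: float,
                 small_cost: float, route: bool) -> float:
    """Monthly spend in USD; with route=True, simple docs go to the small model."""
    if not route:
        return docs * big_cost
    simple_docs = docs * simple_share
    complex_docs = docs - simple_docs
    return simple_docs * small_cost + complex_docs * big_cost


# Hypothetical numbers: 10,000 docs/month, 80% are simple forms,
# $0.02 per doc on the big model vs $0.002 per doc on the small one.
baseline = monthly_cost(10_000, 0.8, big_cost=0.02, small_cost=0.002, route=False)
routed = monthly_cost(10_000, 0.8, big_cost=0.02, small_cost=0.002, route=True)
print(f"all big model: ${baseline:.2f}, routed: ${routed:.2f}, saved: {1 - routed / baseline:.0%}")
```

With these made-up numbers, routing cuts the bill from about $200 to about $56 a month; plug in your own measured per-call costs from the benchmark to get a real estimate.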

Checking Output Consistency for Agents

Building a multi-agent system? You need to know if your "reasoning" agent is consistently logical, not just occasionally brilliant. Benchmark the same reasoning task 20 times. OpenMark's variance charts will show you if the agent's output is stable or all over the place, preventing a production nightmare where your agent randomly decides 2+2=5.

Comparing New Model Releases

A new model drops every Tuesday. Does it live up to the marketing for your tasks? Don't just read the blog post. Quickly clone an existing benchmark task in OpenMark, add the new hotness to the lineup, and run a head-to-head. See if it's actually worth switching your integration over to, based on your own real-world criteria.

Overview

About Hostim.dev

Alright, devs, gather 'round. Ever feel like launching your app is a whole-ass side quest involving YAML incantations, cloud console nightmares, and bills that hit like a surprise plot twist? Hostim.dev is here to yeet that complexity into the sun. Think of it as your chill, bare-metal PaaS wingman based in Germany. It's built for anyone who just wants to ship containerized apps (Docker images, Git repos, or full-blown Docker Compose files) without getting a PhD in DevOps.

It automatically hooks you up with the essentials, like MySQL, PostgreSQL, Redis, and storage, so you're not juggling ten different services. Every project gets its own isolated Kubernetes namespace (they handle the K8s, you get the peace of mind). It's hosted in the EU, so it's GDPR-compliant by default, with no sketchy data locations. Pricing is transparent and billed by the hour, starting from a low-key €2.5/month, so you can actually predict your costs.

Whether you're a solo builder, a startup trying to move fast, or an agency managing multiple client projects, Hostim.dev is designed to get you from code to live app in minutes, not days.

About OpenMark AI

Alright, let's cut through the AI hype. You're building something cool, you need a brainy LLM to power it, and you're staring down a list of 100+ models like it's a Netflix menu with nothing good. Which one actually works for your thing? Which won't cost an arm and a leg? And will it flake out on you after one good response? That's the chaos OpenMark AI fixes.

It's your personal AI model testing arena. You just describe your task in plain English (or any language, really), hit go, and it runs that exact prompt against a ton of different models at once: GPTs, Claude, Gemini, open-source stuff, you name it. No juggling a million API keys, no coding a bespoke testing suite. You get back a side-by-side breakdown of who's the real MVP, based on actual cost per API call, speed, scored quality, and (this is the kicker) consistency across multiple runs. So you see if a model is reliably smart or just got lucky once.

It's built for devs and product teams who are done guessing and need hard data before they ship. Think of it as due diligence for your AI feature, so you don't end up picking the flashy model that totally bombs on your specific use case.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

You get a full 5-day trial project with no credit card required. You can deploy any Docker image, Git repo, or Compose file and test drive all the features, including the built-in databases (which have their own free tiers). It's a proper, no-strings-attached sandbox to see if the vibe is right for you.

Can I deploy with just a Compose file?

Absolutely! That's one of the main flexes. Just copy-paste your docker-compose.yml into the Hostim dashboard, and it'll spin up all your services, networks, and volumes. No need to break it down or rewrite it for their platform. It's the ultimate simplicity hack.

Do I need to know Kubernetes?

Nope, not at all. That's the whole point. Hostim uses Kubernetes on the backend to make everything robust and isolated, but you never have to touch it, write a Kubernetes manifest, or even know what a pod is. You manage your apps through their simple UI. They handle the K8s chaos.

Is this for solo devs or teams?

Both! Solo devs love the speed and simplicity. Teams and agencies love the project isolation and clear per-project cost tracking, which makes collaboration and client handovers clean. You can manage everything from a single account, so it works just as well for one person as for a whole agency.

OpenMark AI FAQ

Do I need my own API keys to use OpenMark?

Nope, that's the whole vibe! You use OpenMark credits. We handle all the API calls to the different model providers (OpenAI, Anthropic, Google, etc.) on our backend. You just describe your task, pick models from our catalog, and run the benchmark. No key management, no separate bills, no setup friction.

How is this different from reading benchmark leaderboards?

Those public leaderboards test models on generic tasks like trivia or math. OpenMark is for your specific, unique task. It's the difference between reading a car's top speed and actually test-driving it on your commute route. You get results based on your actual prompts, your data, and your definition of "good."

What kind of tasks can I benchmark?

Pretty much anything you'd use an LLM for! Common ones are classification, translation, data extraction, Q&A, summarization, creative writing, code generation, and testing RAG pipelines. If you can describe it, you can probably benchmark it. The platform is built for real-world, task-level testing.

How does the scoring and "variance" thing work?

When you run a benchmark, we execute your prompt multiple times for each model (configurable). We then score each output based on your task's goal. The results show you the average score, but more importantly, they show the spread—like a distribution chart. A tight cluster means the model is consistent. A wide spread means it's unpredictable, which is a huge red flag for production use.
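Here's a minimal sketch of that kind of summary using Python's standard `statistics` module: two made-up models with the same average score but very different spreads. The scores, the 0 to 10 scale, and the `max_stdev` cutoff for flagging are hypothetical; OpenMark's actual scoring and charts may work differently.

```python
import statistics


def summarize_runs(scores: list[float], max_stdev: float = 1.0) -> dict:
    """Average plus spread for one model's repeated runs of the same prompt."""
    spread = statistics.pstdev(scores)  # population stdev: spread of these exact runs
    return {
        "mean": statistics.mean(scores),
        "stdev": spread,
        "min": min(scores),
        "max": max(scores),
        "consistent": spread <= max_stdev,  # hypothetical red-flag cutoff
    }


steady = [8.0, 8.0, 9.0, 8.0, 7.0]      # tight cluster around 8
wildcard = [10.0, 3.0, 10.0, 7.0, 10.0]  # same mean of 8, wide spread

print("steady:", summarize_runs(steady))
print("wildcard:", summarize_runs(wildcard))
```

Both models average 8, so an average-only leaderboard would call it a tie; the spread is what exposes the wildcard. `pstdev` describes the runs you actually have, while `statistics.stdev` (the sample version) gives a slightly larger, more cautious estimate when you only have a handful of runs.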

Alternatives

Hostim.dev Alternatives

Yo, so you've heard about Hostim.dev, the slick EU-based PaaS that's basically a cheat code for deploying Docker and Git apps. It's that all-in-one platform that gets your projects live with built-in databases and zero DevOps headaches. But hey, maybe you're shopping around. That's totally normal. People look for other options for all sorts of reasons: maybe they need a different price point, specific features Hostim doesn't have, or servers in another part of the world. When you're on the hunt for a different platform, you gotta know what's important to you. Think about your budget, the tech stack you're using, and where you want your data to live. Do you need more advanced scaling options, or is dead-simple setup your top priority? Getting clear on your non-negotiables will help you cut through the noise and find the right fit.

What is Hostim.dev?

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) that makes deploying Docker and Git apps super simple, with built-in databases and transparent pricing, all hosted in Germany.

Who is Hostim.dev for?

It's perfect for solo developers, startups, or agencies who want to launch containerized apps fast without dealing with complex DevOps setups.

Is Hostim.dev free?

Not permanently. You can start with a free 5-day trial project, and after that Hostim.dev uses transparent hourly billing, so you only pay for what you use.

Why choose Hostim.dev?

You get one-click deployments, built-in databases, automatic HTTPS, real-time monitoring, and GDPR-compliant EU hosting for peace of mind.

OpenMark AI Alternatives

So you're checking out OpenMark AI, the slick web app that lets you pit a hundred-plus LLMs against your specific task to see who's actually worth the API call. It's a dev tool built for the crucial pre-launch hustle, giving you the real tea on cost, speed, quality, and consistency before you commit code. People scope out alternatives for all the usual reasons. Maybe the pricing model doesn't vibe with your current workflow, or you need a feature that's still on the roadmap. Sometimes you just prefer a different interface or need it to play nicer with your existing tech stack. When you're shopping around, keep your eyes on the prize. You want something that gives you actual, unfiltered results from real API calls, not marketing fluff. The whole point is to nail down the best bang-for-your-buck model for your exact use case, so prioritize tools that deliver transparent, actionable data on performance and stability.