Lovalingo vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

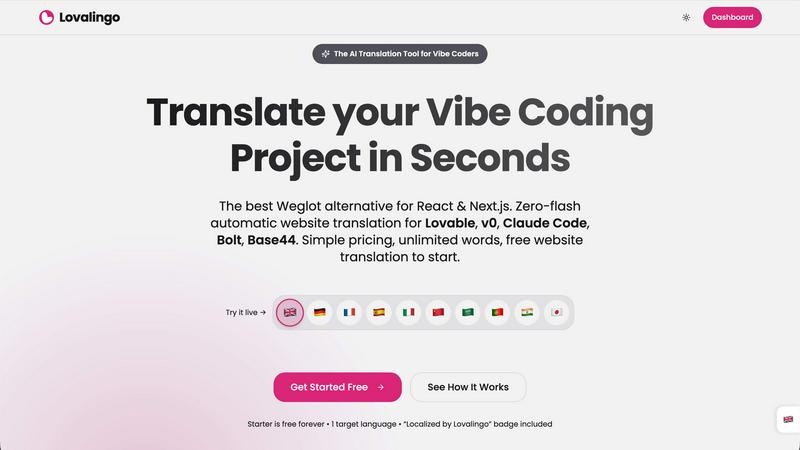

Lovalingo

Instantly translate your React app in one prompt, no JSON files or flash.

Last updated: February 28, 2026

OpenMark AI

Stop guessing which AI model slaps for your task. Just describe it and we'll benchmark 100+ models for you in minutes, no API keys needed.

Last updated: March 26, 2026

Feature Comparison

Lovalingo

Zero-Flash, Render-Native Translation

Forget those janky script-based tools like Weglot that hack your DOM after the page loads, causing ugly flickers and layout shifts. That's amateur hour. Lovalingo integrates directly into your React render cycle. The translation happens before the page paints, meaning your users see the correct language instantly with a perfectly stable layout. It's smooth, native, and performs like it's part of your own codebase. No flash, no jump, just pure, clean multilingual vibes.

One-Prompt AI Setup

Configuring traditional i18n is a vibe-killer. Lovalingo respects your flow. You copy a single, clean prompt, paste it into Lovable, Claude, or your AI tool of choice, and you're done. Your AI assistant will install the npm package and wrap your app. In 60 seconds flat, you go from monolingual to multilingual. It's the setup experience you deserve: no dashboard labyrinths, no confusing configs, just instant global readiness.

Automatic Translation & Zero Maintenance

The dream: no translation files. Ever. Lovalingo makes it real. We auto-detect the text in your app and translate it on the fly. You add a new feature or page? We handle it. No more maintaining 3,500 JSON string entries for 5 languages. It's all automatic, running in the background while you focus on building. This is zero-maintenance i18n for the AI era.

Built-In Multilingual SEO

Going global isn't just about UI text; you need to be found. Lovalingo handles the heavy SEO lifting automatically. We generate multilingual sitemaps and inject the correct hreflang tags and meta descriptions for you. This means search engines can index your Spanish, French, and Japanese versions correctly from day one, driving real organic traffic to your global sites without you lifting a finger.
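To make the hreflang part concrete, here is a minimal TypeScript sketch of the kind of tags a multilingual page needs. This is not Lovalingo's code: the helper name `buildHreflangTags`, the `example.com` domain, and the choice to serve the default locale without a prefix are all assumptions for illustration.

```typescript
// Hypothetical helper: builds <link rel="alternate"> tags for one page.
// Assumes path-based locale URLs such as https://example.com/es/about.
function buildHreflangTags(
  origin: string,
  path: string,
  locales: string[],
  defaultLocale: string
): string[] {
  // Normalize so "about" and "/about" produce the same URLs.
  const cleanPath = path.charAt(0) === "/" ? path : `/${path}`;

  const tags = locales.map((locale) => {
    // In this sketch the default locale is served without a prefix.
    const prefix = locale === defaultLocale ? "" : `/${locale}`;
    return `<link rel="alternate" hreflang="${locale}" href="${origin}${prefix}${cleanPath}" />`;
  });

  // x-default marks the URL to use when no listed locale matches the visitor.
  tags.push(
    `<link rel="alternate" hreflang="x-default" href="${origin}${cleanPath}" />`
  );
  return tags;
}

const hreflangTags = buildHreflangTags(
  "https://example.com",
  "/about",
  ["en", "es", "fr", "ja"],
  "en"
);
```

Each language version of a page should list every alternate, including itself, which is why the helper returns the full set rather than one tag per locale.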

OpenMark AI

Plain Language Task Wizard

Forget writing complex code or JSON configs. You just type out what you want the AI to do, like "extract the invoice total and due date from this messy email" or "write a chill marketing tweet for this new feature." OpenMark's wizard takes your vibe and builds the benchmark. It's the ultimate "explain it to me like I'm five" but for setting up professional-grade LLM tests. No PhD in prompt engineering required.

Real API Cost & Latency Showdown

This ain't about theoretical token prices on a spec sheet. OpenMark makes real API calls to every model and shows you the actual receipt—how much that specific request cost and how long it actually took to come back. You can instantly spot the models that give you 95% of the quality for 50% of the price, or the ones that are weirdly slow. It's all about cost efficiency, not just raw cheapness.
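If you want to picture what "cost efficiency, not just raw cheapness" means in practice, here is a rough TypeScript sketch. It is not OpenMark's scoring logic: the `ModelResult` shape, the `rankByCostEfficiency` function, the model names, and every number below are made up for illustration.

```typescript
// Hypothetical shape of one benchmark result; not OpenMark's actual schema.
interface ModelResult {
  model: string;
  quality: number;   // scored quality, 0 to 1
  costUsd: number;   // measured cost of the request, in US dollars
  latencyMs: number; // measured round-trip time, in milliseconds
}

// Rank by quality per dollar, after dropping models below a quality floor.
// The floor is what keeps a cheap-but-bad model from winning.
function rankByCostEfficiency(
  results: ModelResult[],
  minQuality: number
): ModelResult[] {
  return results
    .filter((r) => r.quality >= minQuality)
    .sort((a, b) => b.quality / b.costUsd - a.quality / a.costUsd);
}

// Made-up numbers for illustration only.
const sampleResults: ModelResult[] = [
  { model: "model-a", quality: 1.0, costUsd: 0.02, latencyMs: 1800 },
  { model: "model-b", quality: 0.95, costUsd: 0.01, latencyMs: 900 },
  { model: "model-c", quality: 0.6, costUsd: 0.001, latencyMs: 400 },
];

const ranked = rankByCostEfficiency(sampleResults, 0.85);
```

With these sample numbers, `model-b` ranks first: it delivers 95% of `model-a`'s quality at half the price, while `model-c` is the cheapest by far but falls below the quality floor and drops out.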

Variance & Consistency Scoring

Any model can have a one-hit-wonder output. OpenMark runs your task multiple times for each model to see the variance. You get to see if Model A nails it 9 times out of 10, or if Model B is a complete wildcard that gives you genius one minute and gibberish the next. This stability check is crucial for shipping something you can actually trust in production, not just a cool demo.

Hosted Benchmarking (No Key Drama)

The biggest flex? You don't need to set up individual API keys for OpenAI, Anthropic, Google, etc., just to compare them. You buy OpenMark credits and it handles all the backend API calls across its massive model catalog. It removes the setup hell and lets you focus purely on the results. It's like having a universal remote for every AI model out there.

Use Cases

Lovalingo

SaaS Founders Scaling Internationally

You've got product-market fit at home and are ready to conquer new territories. The last thing you need is a months-long i18n project slowing your roll. With Lovalingo, you can launch your MVP in multiple new markets over a weekend. Get immediate user feedback from Germany, Spain, or Japan without the traditional dev tax, and iterate fast on a truly global scale.

Agencies Building Client Projects on Lovable

Speed and quality are everything for your agency. When a client needs a site that works in three languages, you can't bill for weeks of manual translation work. Integrate Lovalingo into your standard Lovable workflow. Deliver stunning, fully localized sites to your clients in record time, making your proposals unbeatable and your profit margins much, much happier.

Indie Hackers & Solopreneurs

You're a one-person army wearing every hat. Managing translations manually is simply not an option. Lovalingo acts as your automated i18n co-founder. It handles the entire complexity of going global, letting you compete with bigger players from your couch. Launch globally from the start, capture international demand, and grow your user base without the overhead.

Devs Who Hate Manual i18n Workflows

Let's be real: managing JSON files and string keys is tedious, error-prone, and just not fun. If you have a visceral hatred for that t('button.submit') life, Lovalingo is your liberation. It abstracts all that nonsense away. You just build your UI with plain text, and we make it multilingual. Reclaim your joy for development and spend time on the interesting problems.

OpenMark AI

Pre-Launch Model Selection

You're about to bake an LLM into your app's new support chatbot. Do you go with GPT-4o, Claude 3.5 Sonnet, or a fine-tuned Llama? Instead of debating in Slack, create a benchmark with real user query examples. Run it. In minutes, you'll have data on which model understands your domain best, responds fastest, and keeps your API bill from being absolutely unhinged.

Validating Cost-Efficiency for a Workflow

Your data extraction pipeline uses an expensive top-tier model for every single document. Is that overkill? Use OpenMark to test your extraction prompts against cheaper, smaller models. You might find one that's just as accurate for simple forms, letting you save the big guns for only the complex cases and slashing your monthly costs dramatically.

Checking Output Consistency for Agents

Building a multi-agent system? You need to know if your "reasoning" agent is consistently logical, not just occasionally brilliant. Benchmark the same reasoning task 20 times. OpenMark's variance charts will show you if the agent's output is stable or all over the place, preventing a production nightmare where your agent randomly decides 2+2=5.

Comparing New Model Releases

A new model drops every Tuesday. Does it live up to the marketing for your tasks? Don't just read the blog post. Quickly clone an existing benchmark task in OpenMark, add the new hotness to the lineup, and run a head-to-head. See if it's actually worth switching your integration over to, based on your own real-world criteria.

Overview

About Lovalingo

Alright, vibe coders, listen up. You're crushing it with Lovable, v0, Claude Code, and the rest of the AI dev squad, shipping features at lightspeed. But the second you think about going global, you hit the i18n wall. Manual JSON files? Broken layouts? SEO nightmares? That's the old world. Lovalingo is your eject button. We're the AI-native translation layer that eliminates all that maintenance BS. Think of us as the automatic transmission for your app's global drive. You paste one prompt, and boom - your React or Next.js app speaks 20+ languages, with zero flicker, zero manual string management, and native SEO baked right in. It's built specifically for the vibe-coding workflow, so you can scale to international markets without slowing down your legendary shipping speed. For SaaS founders, agencies, and devs who'd rather build cool stuff than manage translation spreadsheets, Lovalingo is your new best friend.

About OpenMark AI

Alright, let's cut through the AI hype. You're building something cool, you need a brainy LLM to power it, and you're staring down a list of 100+ models like it's a Netflix menu with nothing good. Which one actually works for your thing? Which won't cost an arm and a leg? And will it flake out on you after one good response? That's the chaos OpenMark AI fixes. It's your personal AI model testing arena. You just describe your task in plain English (or any language, really), hit go, and it runs that exact prompt against a ton of different models—GPTs, Claude, Gemini, open-source stuff, you name it—all at once. No juggling a million API keys, no coding a bespoke testing suite. You get back a side-by-side breakdown of who's the real MVP, based on actual cost per API call, speed, scored quality, and—this is the kicker—consistency across multiple runs. So you see if a model is reliably smart or just got lucky once. It's built for devs and product teams who are done guessing and need hard data before they ship. Think of it as due diligence for your AI feature, so you don't end up picking the flashy model that totally bombs on your specific use case.

Frequently Asked Questions

Lovalingo FAQ

How is this different from Weglot or Google Translate?

Weglot is an external script that manipulates your DOM after load (causing flicker), locks you into their dashboard, and charges per word. Lovalingo is a native React library—no DOM hacking, just clean integration. It's built for developers, with unlimited words (fair use) and per-project pricing. It's like comparing a custom engine part to duct tape on your bumper.

Does it work with my static site (Next.js, Vercel, etc.)?

Absolutely! That's where we shine. Whether you're using Next.js App Router, Pages Router, or any React-based static site generator, Lovalingo's render-native approach works seamlessly. We support both path-based (/es/about) and query-based (?locale=es) routing, giving you full control for optimal SEO and user experience on any hosting platform.
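For a feel of how the two routing styles differ, here is a small TypeScript sketch that pulls a locale out of either URL shape. It is an illustration only, not Lovalingo's API: `resolveLocale`, `SUPPORTED_LOCALES`, and `DEFAULT_LOCALE` are hypothetical names, and giving the path priority over the query string is an assumption.

```typescript
// Hypothetical resolver for the two routing styles; not Lovalingo's API.
const SUPPORTED_LOCALES = ["en", "es", "fr", "ja"];
const DEFAULT_LOCALE = "en";

function resolveLocale(pathname: string, search: string): string {
  // Path-based: the first path segment is the locale, e.g. /es/about.
  const firstSegment = pathname.split("/").filter((s) => s.length > 0)[0];
  if (firstSegment !== undefined && SUPPORTED_LOCALES.indexOf(firstSegment) !== -1) {
    return firstSegment;
  }

  // Query-based: look for locale=xx in the query string, e.g. ?locale=es.
  const query = search.charAt(0) === "?" ? search.slice(1) : search;
  for (const pair of query.split("&")) {
    const [key, value] = pair.split("=");
    if (key === "locale" && value !== undefined && SUPPORTED_LOCALES.indexOf(value) !== -1) {
      return value;
    }
  }

  // Neither style matched, so fall back to the default locale.
  return DEFAULT_LOCALE;
}
```

Here `resolveLocale("/es/about", "")` and `resolveLocale("/about", "?locale=es")` both resolve to `"es"`, and an unsupported or missing locale falls back to the default.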

What about my existing i18n JSON files? Do I have to start over?

Nope, you can keep your legacy! Lovalingo can be integrated incrementally. You can use it for all new features and pages you build with your vibe-coding tools, leaving your old, manually-translated sections as-is. This lets you modernize your workflow without a scary, all-or-nothing migration.

Can I edit the auto-generated translations?

Yes, you have full control. While the auto-translation is great for getting 95% of the way there instantly, you might want to tweak brand voice or specific terms. Lovalingo provides a simple interface to review, edit, and override any translation, ensuring your app's personality shines through in every language.

OpenMark AI FAQ

Do I need my own API keys to use OpenMark?

Nope, that's the whole vibe! You use OpenMark credits. We handle all the API calls to the different model providers (OpenAI, Anthropic, Google, etc.) on our backend. You just describe your task, pick models from our catalog, and run the benchmark. No key management, no separate bills, no setup friction.

How is this different from reading benchmark leaderboards?

Those public leaderboards test models on generic tasks like trivia or math. OpenMark is for your specific, unique task. It's the difference between reading a car's top speed and actually test-driving it on your commute route. You get results based on your actual prompts, your data, and your definition of "good."

What kind of tasks can I benchmark?

Pretty much anything you'd use an LLM for! Common ones are classification, translation, data extraction, Q&A, summarization, creative writing, code generation, and testing RAG pipelines. If you can describe it, you can probably benchmark it. The platform is built for real-world, task-level testing.

How does the scoring and "variance" thing work?

When you run a benchmark, we execute your prompt multiple times for each model (the number of runs is configurable). We then score each output based on your task's goal. The results show you the average score but, more importantly, the spread, shown as a distribution chart. A tight cluster means the model is consistent. A wide spread means it's unpredictable, which is a huge red flag for production use.
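The average-versus-spread idea can be sketched in a few lines of TypeScript. This is not OpenMark's scoring code: `summarizeRuns` and `RunSummary` are hypothetical names, the scores are invented, and using the population standard deviation as the measure of spread is an assumption.

```typescript
// Hypothetical summary of repeated runs; not OpenMark's scoring code.
interface RunSummary {
  mean: number;
  stdDev: number; // population standard deviation of the scores
}

function summarizeRuns(scores: number[]): RunSummary {
  if (scores.length === 0) {
    throw new Error("need at least one score");
  }
  const mean = scores.reduce((sum, s) => sum + s, 0) / scores.length;
  // Average squared distance from the mean.
  const variance =
    scores.reduce((sum, s) => sum + (s - mean) * (s - mean), 0) / scores.length;
  return { mean, stdDev: Math.sqrt(variance) };
}

// Made-up scores: both models average 0.75, but only one is consistent.
const steady = summarizeRuns([0.75, 0.75, 0.75, 0.75]);
const erratic = summarizeRuns([1.0, 0.5, 1.0, 0.5]);
```

Both sample models land on the same mean, so the average alone would call them equal. The standard deviation is what separates them: 0 for the steady model versus 0.25 for the erratic one.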

Alternatives

Lovalingo Alternatives

So you've heard the hype about Lovalingo, the slick new tool that auto-translates your React app without the usual i18n nightmare. It's basically your SEO and localization wingman, handling everything from sitemaps to zero-flash translations so you can vibe code on platforms like Lovable without breaking a sweat.

But let's be real, you're probably shopping around. Maybe the pricing isn't your vibe, or you need something that plays nice with a different tech stack. Perhaps you're just doing your due diligence before pulling the trigger. It's smart to peek at the other options on the menu.

When you're scoping out the competition, keep your eyes peeled for a few key things. You want something that doesn't make your UI flicker like a bad neon sign, actually helps you get found on Google in other languages, and doesn't shackle you to managing a million JSON files. The dream is a setup that just works and gets out of your way.

OpenMark AI Alternatives

So you're checking out OpenMark AI, the slick web app that lets you pit a hundred-plus LLMs against your specific task to see who's actually worth the API call. It's a dev tool built for the crucial pre-launch hustle, giving you the real tea on cost, speed, quality, and consistency before you commit code.

People scope out alternatives for all the usual reasons. Maybe the pricing model doesn't vibe with your current workflow, or you need a feature that's still on the roadmap. Sometimes you just prefer a different interface or need it to play nicer with your existing tech stack.

When you're shopping around, keep your eyes on the prize. You want something that gives you actual, unfiltered results from real API calls, not marketing fluff. The whole point is to nail down the best bang-for-your-buck model for your exact use case, so prioritize tools that deliver transparent, actionable data on performance and stability.