Ember

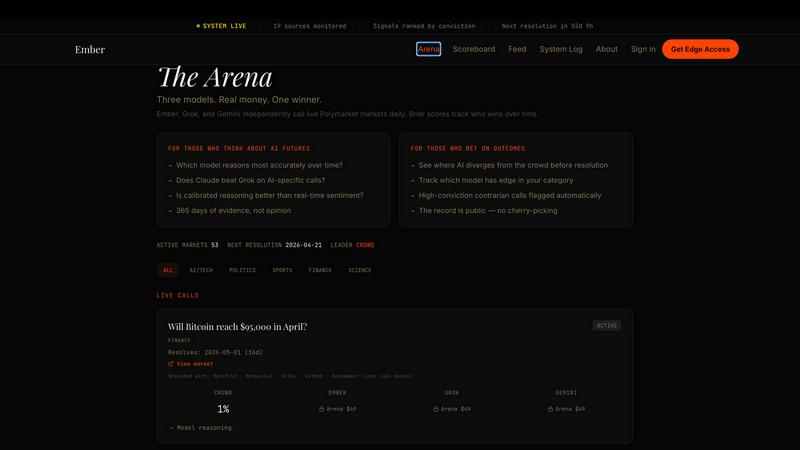

Three AIs call live markets daily, and Ember locks their calls on the public record before the outcome, so you can see who's actually right.

About Ember

Ember is a public AI prediction engine that runs on a brutally simple premise: an AI that won't show its work isn't worth trusting. Every morning at 7 AM EST, three different AI models (Claude by Anthropic, Grok by xAI, and Gemini by Google) independently call live Polymarket markets before they resolve. They don't chat, they don't collude, and they don't consult each other. When any of these models disagrees with the real-money crowd by 10 or more percentage points, that divergence gets flagged as a high-conviction signal.

Every call is timestamped before the outcome happens and locked forever; nothing gets edited or deleted after the fact. Accuracy is tracked using Brier scores, a metric that rewards both being right and being confident about it. The model that beats the crowd most consistently across a full 365-day cycle wins bragging rights and credibility. Wrong calls get a full post-mortem breakdown. The entire record builds in public, transparent for anyone to see.

Ember is built for degenerate bettors, prediction market degens, AI researchers, and anyone who wants to see whether these models actually know what they're talking about when real money is on the line. It's not a black box. It's a public proof layer that shows you exactly where the edge might be hiding.

Features of Ember

Three Independent AI Models Calling Daily

Every morning at 7 AM EST, Claude, Grok, and Gemini independently analyze live Polymarket markets and assign their own probabilities without any cross-talk. Claude reasons carefully from first principles using prediction markets, bookmaker lines, and AI research feeds. Grok reads live X sentiment and cultural vibes before calling. Gemini grounds every call in live search results and factual verification. They are free to disagree. When all three agree, that's noted. When they split, that's your signal.

Divergence Flagging for High-Conviction Signals

When any Ember model's probability diverges from the Polymarket real-money crowd by 10 or more percentage points, that divergence is automatically flagged as a high-conviction signal. Either the crowd is wrong or Ember is wrong, and the public record will show which one. This is the core mechanic that separates Ember from other prediction tools. You get to see exactly where the models think the market is mispriced before anything resolves.
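The flagging rule described above can be sketched in a few lines. This is a minimal illustration, not Ember's actual implementation: the `Call` class, its field names, and the sample markets are hypothetical, and the only detail taken from the description is the 10-point threshold.

```python
from dataclasses import dataclass

# Threshold in percentage points, taken from the description above.
DIVERGENCE_THRESHOLD = 10.0


@dataclass(frozen=True)
class Call:
    """One model's call on one market (hypothetical structure)."""
    model: str
    market: str
    model_prob: float  # model's probability, in percent (0 to 100)
    crowd_prob: float  # Polymarket crowd price, in percent (0 to 100)


def divergence(call: Call) -> float:
    """Signed gap between model and crowd, in percentage points."""
    return call.model_prob - call.crowd_prob


def is_high_conviction(call: Call, threshold: float = DIVERGENCE_THRESHOLD) -> bool:
    """Flag the call when the absolute gap reaches the threshold."""
    return abs(divergence(call)) >= threshold


# Made-up sample calls, for illustration only.
calls = [
    Call("Claude", "example-market-a", 62.0, 48.0),  # 14 points above the crowd
    Call("Grok", "example-market-a", 55.0, 50.0),    # 5 points, not flagged
    Call("Gemini", "example-market-b", 40.0, 50.0),  # exactly 10 points below
]

flagged = [c for c in calls if is_high_conviction(c)]
for c in flagged:
    print(f"{c.model} on {c.market}: {divergence(c):+.0f} points vs crowd")
```

The gap is kept signed so the output shows whether the model is above or below the crowd, while the flag itself depends only on the size of the gap.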

Timestamped and Immutable Record

Every single call is timestamped before the outcome happens and locked forever on the public record. Nothing is edited. Nothing is deleted. No retroactive changes, no fudging the numbers, no cleaning up the bad takes. Every wrong call gets a full post-mortem breakdown explaining what went wrong. The record builds transparently across 365 days, and anyone can audit it at any time. This is accountability on a level most AI products are terrified of.

Brier Score Accuracy Tracking

Ember tracks accuracy using the Brier score, a standard metric for scoring probabilistic predictions. It rewards both accuracy and confidence, meaning a model that says 90% and is right scores better than one that says 51% and is right. This prevents models from gaming the system by always calling close to 50%. The model that beats the crowd most consistently across the full year wins. No shortcuts, no gimmicks.
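As a rough sketch of how that scoring works, the snippet below computes the standard binary Brier score (mean squared difference between the forecast probability and the 0/1 outcome; lower is better). The function name and the sample forecasts are hypothetical and are not taken from Ember's record.

```python
def brier_score(forecasts):
    """Mean squared error between forecast probabilities and 0/1 outcomes.

    `forecasts` is a list of (probability, outcome) pairs, where
    probability is in [0, 1] and outcome is 1 if the event happened, else 0.
    Lower is better: 0.0 is a perfect record.
    """
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum((p - outcome) ** 2 for p, outcome in forecasts) / len(forecasts)


# A confident correct call beats a hedged correct call.
confident_right = brier_score([(0.90, 1)])  # about 0.01
hedged_right = brier_score([(0.51, 1)])     # about 0.24

# A forecaster who always says 50% scores 0.25 whatever happens,
# so sitting on the fence can never beat a well-calibrated model.
always_fifty = brier_score([(0.5, 1), (0.5, 0), (0.5, 1), (0.5, 0)])

print(f"90% and right: {confident_right:.4f}")
print(f"51% and right: {hedged_right:.4f}")
print(f"always 50%:    {always_fifty:.4f}")
```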

Use Cases of Ember

Prediction Market Degens Looking for an Edge

If you are actively trading on Polymarket, Manifold, or Metaculus, Ember gives you a second opinion from three different AI models every single morning. When Ember diverges from the crowd by 10 or more points, that is a concrete signal that something might be mispriced. You get to see the call before the market resolves, and you can decide whether to fade the AI or fade the crowd. Real money decides who is right.

AI Researchers Studying Model Calibration

For anyone studying how well large language models actually understand probability and uncertainty, Ember is a goldmine of public data. Three models from three different companies make independent calls on real-money markets, with full timestamped records and Brier score tracking. You can analyze exactly which models are overconfident, which are underconfident, and which domains they are actually good at predicting.
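One common way to check for over- or underconfidence is a calibration table: group calls by stated probability and compare the average stated probability in each group with how often those events actually happened. The sketch below assumes the record can be exported as (probability, outcome) pairs; that export format, the function name, and the sample data are all hypothetical.

```python
from collections import defaultdict


def calibration_table(forecasts, n_bins=5):
    """Group forecasts into equal-width probability bins.

    `forecasts` is a list of (probability, outcome) pairs with
    probability in [0, 1] and outcome 0 or 1. Returns one row per
    non-empty bin: (bin_index, count, mean_forecast, observed_rate).
    """
    bins = defaultdict(list)
    for p, outcome in forecasts:
        # A probability of exactly 1.0 goes into the top bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((p, outcome))

    rows = []
    for idx in sorted(bins):
        items = bins[idx]
        mean_forecast = sum(p for p, _ in items) / len(items)
        observed_rate = sum(o for _, o in items) / len(items)
        rows.append((idx, len(items), mean_forecast, observed_rate))
    return rows


# Made-up record for one model, for illustration only.
sample = [
    (0.90, 1), (0.85, 0), (0.90, 0), (0.95, 1),  # high-confidence calls
    (0.30, 0), (0.25, 1), (0.35, 0), (0.30, 0),  # low-probability calls
]

rows = calibration_table(sample)
for idx, count, mean_forecast, observed_rate in rows:
    print(f"bin {idx}: n={count} forecast={mean_forecast:.2f} observed={observed_rate:.2f}")
```

In this made-up sample, the high-confidence bin averages a 90% forecast but only half of those events happened, which is the pattern an overconfident model would show. Real conclusions would need far more calls per bin.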

Bettors Who Want Transparency Over Black Boxes

Most prediction tools and betting algorithms are complete black boxes. You get a pick, you have no idea how they arrived at it, and if they are wrong you never hear about it again. Ember shows all of its work. You can see the sources each model reads, you can see the reasoning, you can see the divergence, and you can see the post-mortem when a call goes wrong. No trust required when you can verify everything.

Content Creators and Analysts Tracking Market Psychology

If you write about prediction markets, AI, or betting psychology, Ember provides a daily data point on how AI models perceive the same information differently. Watching Claude, Grok, and Gemini disagree on the same market is genuinely fascinating and reveals a lot about how each model processes information. The divergence data alone is worth tracking for anyone analyzing market efficiency and AI reasoning.

Frequently Asked Questions

How does Ember make money if the calls are public?

Ember operates on a subscription model for early access. Subscribers see the signals at 7 AM EST before they are released to the public. The edge is timing. Public release follows shortly after, but subscribers get the information first. The subscription is $29 per month for full access to all divergence signals, locked calls, and the complete record.

What happens if all three AI models agree with the crowd?

When all three models agree with the Polymarket crowd within a few points, no divergence signal is generated. That is noted in the record as consensus. Ember only flags divergence when one or more models disagree by 10 or more points. Consensus is not the goal. Finding where the models think the crowd is wrong is the entire point.

Can I see the actual reasoning behind each call?

Yes, the reasoning is part of the public record for every call. You can see what each model read, what probability it assigned, and when the call was locked. For wrong calls, there is a full post-mortem explaining what went wrong. Nothing is hidden. The entire record builds transparently across the 365-day cycle.

How is accuracy measured and what is a Brier score?

The Brier score measures the accuracy of probabilistic predictions: it is the average squared difference between the probability a model stated and what actually happened (1 if the event occurred, 0 if it did not). For yes/no markets it ranges from 0 to 1, where 0 is a perfect record and 1 means being fully confident and wrong every time. The formula penalizes overconfidence heavily. If a model says 90% and is wrong, that hurts much more than if it said 55% and was wrong. This forces models to be honest about their uncertainty. Ember tracks Brier scores for each model and for the crowd across the full year.
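For reference, here is the standard binary Brier score written out, with the two wrong-call examples above worked through. The symbols are notation chosen for this example: N is the number of calls, p_i is the stated probability, and o_i is the outcome (1 if the event happened, 0 if not).

```latex
\mathrm{BS} = \frac{1}{N} \sum_{i=1}^{N} (p_i - o_i)^2

% A single call at 90% that turns out wrong (o = 0):
(0.90 - 0)^2 = 0.81

% A single call at 55% that turns out wrong (o = 0):
(0.55 - 0)^2 = 0.3025
```

The confident miss costs 0.81 against 0.3025 for the hedged miss, which is roughly 2.7 times the penalty.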

Similar to Ember

Liners Africa

Yo, Liners Africa is the go-to spot for discovering, comparing, and reviewing 1,500+ African software products like a total pro.

Tailride

Tailride is your AI homie that scans emails and portals to auto-find invoices, saving you hundreds of hours of grunt work.

VolRadar

VolRadar scans 500+ stocks overnight so you can find the best premium-selling setups in 30 seconds flat.

PopPay

PopPay is your go-to for free, easy peasy SARS-compliant accounting that keeps SA small biz thriving without the boring stuff.

StockFit API

StockFit API serves up clean SEC data that's actually ready for modeling, backtesting, and valuation without the usual accounting headache.

Wize Finance Eligibility Check

Check your UK Ltd's biz loan eligibility in 30 secs with zero credit score impact, no cap.

Stockdrifts

Stop researching stocks like a boomer and start crushing it with AI-powered SEC filings, insider trades, and hedge fund intel in one dashboard.

Vendor Space

Ditch the spreadsheet chaos and manage all your event vendors and payments in one seriously slick platform.